Exploring human vs machine learning (one blogpost at a time)

15 July 2019 | by Piotr Migdał | 5 min read

The field of Artificial Intelligence started with the inspiration, and ambition, to imitate human cognition. We create things in our image and, well, they make similar mistakes:

The biggest lie of every parent, pet owner and deep learning engineer:

“I’ve never shown it that, it must have learned it by itself!”

— a friend, Jan Rzymkowski, on FB; translation mine

While each particular machine learning algorithm comes with its own artifacts and limitations, some issues are much broader. In fact, there are limitations to any learning process, machine and human alike. I think that it’s worth investigating these, as there is a lot of room for cross-pollination between machine learning and cognitive science.

Speaking as a psychologist, I’m flabbergasted by claims that the decisions of algorithms are opaque while the decisions of people are transparent. I’ve spent half my life at it and I still have limited success understanding human decisions. - Jean-François Bonnefon's tweet

In this blog post, I will explain why I consider it an important, and fruitful, problem. My main point is to share my motivation for my other blog posts (existing and planned) exploring concrete aspects of human vs machine cognition.

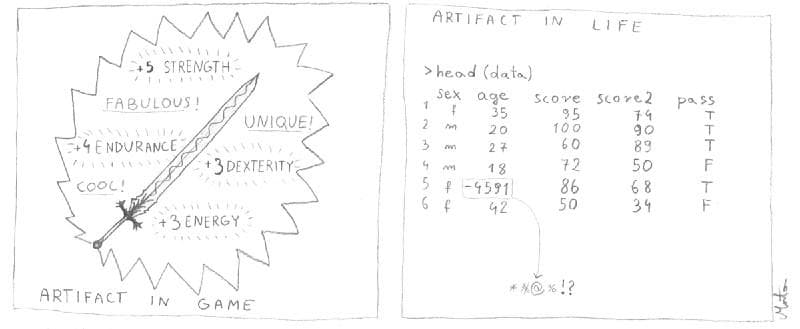

Artifact in game vs in (data scientist) life by MartaCzC (posted with permission)

Motivation

We can explore learning patterns, cognitive biases, and logical fallacies through the prism of machine learning. Moreover, biological systems keep inspiring progress in ML (evolutionary algorithms, artificial neural networks, attention). And sometimes it turns out that deviating from the biological inspiration works better (e.g. ReLU instead of sigmoid activations in deep learning).

Some of these issues are theoretical, of interest to neuroscientists and machine learning researchers. Others are also crucial for any practitioner: to make sure that we reduce (rather than amplify) social biases, improve safety, etc.

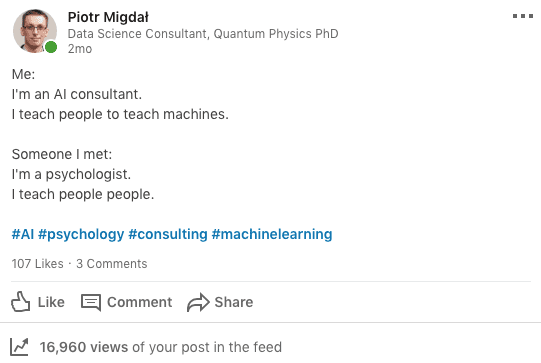

Apart from scientific & philosophical curiosity, and ethical & safety concerns, I also have a more down-to-earth motivation: I make my living teaching machine learning.

“I teach people to teach machines” — my LinkedIn post

It’s handy to have useful analogies and metaphors, showing that machine learning is “reasoning on steroids”, rather than some kind of sorcery.

Machine learning

If you need a general introduction, I wrote Data science intro for math/phys background in 2016. To my surprise, it is still up-to-date and useful to people coming from other backgrounds as well.

Each computer model is different

Contrary to public perception, “AI” is not a single method. Each machine learning model is different, with its own pros and cons, and there is no hope of creating “the best one” for all data (the so-called no free lunch theorem). Computer cognition is different from our own, and it varies from algorithm to algorithm. Querying a relational database with SQL is different from asking Alexa.

In my opinion, it is useful to compare various approaches, no matter if they are deterministic scripts, statistical models, “shallow” machine learning, or deep learning. After all, people have used the word “AI” for many things (“AI” for opponents in computer games is usually a set of deterministic scripts).

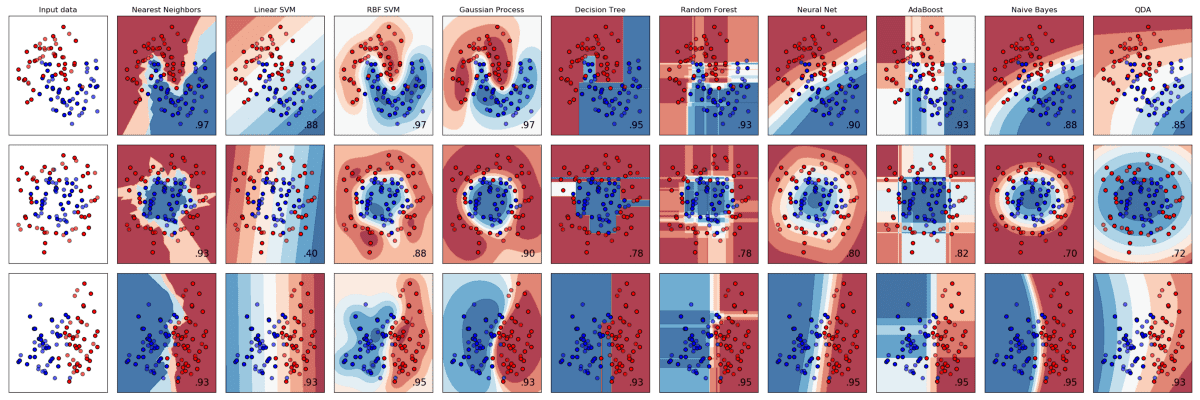

Even when we look at classic machine learning classifiers, they differ both in their predictions and in the way they arrive at them. Let's look at the Scikit-learn classifier comparison: nearest neighbors, linear support vector machine (SVM), radial-basis function SVM, Gaussian process, a decision tree, Random Forest, etc.
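To make this concrete, here is a minimal sketch in the spirit of that comparison. It is not the original Scikit-learn example: the toy dataset (`make_moons`), the hyperparameters and the train/test split are my assumptions, chosen only for illustration. It fits a few of the listed classifiers on the same data and checks how often two of them disagree.

```python
from sklearn.datasets import make_moons
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

# Toy 2D dataset (an assumption, picked because it is not linearly separable).
X, y = make_moons(n_samples=300, noise=0.3, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.4, random_state=42
)

# Illustrative hyperparameters, not tuned in any way.
classifiers = {
    "Nearest Neighbors": KNeighborsClassifier(n_neighbors=3),
    "Linear SVM": SVC(kernel="linear", C=0.025),
    "RBF SVM": SVC(kernel="rbf", gamma=2, C=1),
    "Decision Tree": DecisionTreeClassifier(max_depth=5, random_state=0),
    "Random Forest": RandomForestClassifier(
        max_depth=5, n_estimators=10, random_state=0
    ),
}

predictions = {}
scores = {}
for name, clf in classifiers.items():
    clf.fit(X_train, y_train)
    predictions[name] = clf.predict(X_test)
    scores[name] = clf.score(X_test, y_test)
    print(f"{name:18s} accuracy: {scores[name]:.2f}")

# Fraction of test points on which two models give different answers.
disagreement = (predictions["Linear SVM"] != predictions["RBF SVM"]).mean()
print(f"Linear vs RBF SVM disagree on {disagreement:.0%} of test points")
```

Two models can reach similar accuracy and still disagree on individual points, which is exactly why "which one is the best?" and "which one matches my judgement?" are separate questions.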

So:

- Which algorithm is the best?

- Which algorithm creates predictions matching your own judgement?

There is a work-in-progress Which Machine Learning algorithm are you? by Katarzyna Kańska and me. For a longer list of interactive tools, see Interactive Machine Learning, Deep Learning and Statistics websites. And if you like to draw your own classifiers, see this livelossplot Jupyter Notebook.

Even deep learning is simple

If you don’t know deep learning yet, it is a bunch of simple mathematical operations (addition, multiplication, taking the maximum). Learning about deep learning made me think that it is not deep learning doing magic… it’s our cognition that is based on heuristics that are just good enough.
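As a rough illustration of that claim, below is a sketch of a forward pass through a tiny two-layer network, written once with NumPy and once with plain loops that use nothing but addition, multiplication and `max`. The layer sizes, the random weights and the input vector are made up for the example, and training (how the weights get their values) is left out entirely.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: 4 inputs, 3 hidden units, 2 outputs.
W1 = rng.normal(size=(4, 3))
b1 = rng.normal(size=3)
W2 = rng.normal(size=(3, 2))
b2 = rng.normal(size=2)


def relu(x):
    """ReLU activation: element-wise maximum of x and zero."""
    return np.maximum(x, 0.0)


def forward(x):
    """Vectorized forward pass: linear layer, ReLU, linear layer."""
    hidden = relu(x @ W1 + b1)
    return hidden @ W2 + b2


def forward_loops(x):
    """The same computation spelled out with +, * and max only."""
    hidden = []
    for j in range(W1.shape[1]):
        total = b1[j]
        for i in range(W1.shape[0]):
            total = total + x[i] * W1[i, j]
        hidden.append(max(total, 0.0))
    out = []
    for k in range(W2.shape[1]):
        total = b2[k]
        for j in range(W2.shape[0]):
            total = total + hidden[j] * W2[j, k]
        out.append(total)
    return np.array(out)


x = np.array([1.0, -2.0, 0.5, 3.0])
output = forward(x)
print(output)
print(np.allclose(output, forward_loops(x)))
```

Real networks are much larger and use more kinds of layers, but the building blocks stay at this level of simplicity.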

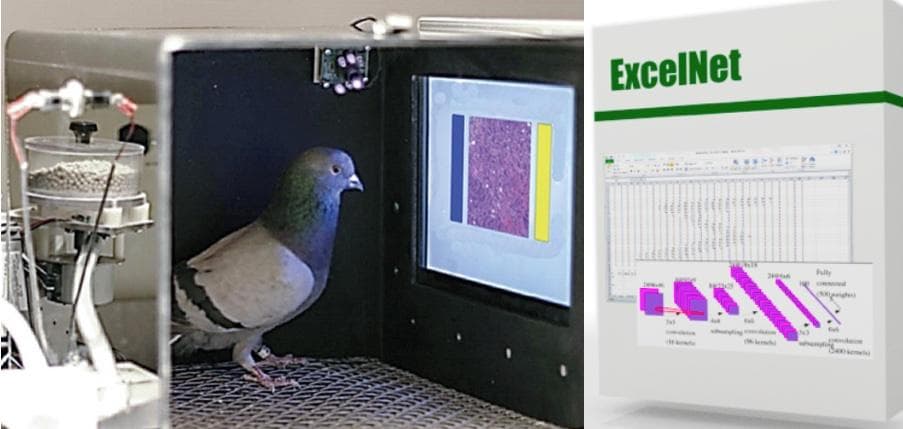

From flesh to machines: pigeons learn to detect cancer tissue from images (video, paper summary) or MNIST recognition using spreadsheets, without any macros.

Don’t get me wrong: mastering deep learning is hard and takes time (as with any specialized activity). But if you want to start playing with it, and you know Python, you can write your first network today; see:

- Learning Deep Learning with Keras (2017)

- Keras or PyTorch as your first deep learning framework (2018)

- Thinking in Tensors, writing in PyTorch (work in progress)

If you think that “oh, recognizing images can be done by machines, but feelings, sentience, spirituality, and deep motivations are inherently human”, I recommend reading Consciousness Explained by Daniel Dennett (and, in general, the philosophy of mind camp). It does a good job of disenchanting consciousness itself.

Let’s learn from mistakes

To err is human... and machine, alike.

If we get the correct answer to our question, we are happy… but that’s it.

Everyone knows that dragons don’t exist. But while this simplistic formulation may satisfy the layman, it does not suffice for the scientific mind. […] The brilliant Cerebron, attacking the problem analytically, discovered three distinct kinds of dragon: the mythical, the chimerical, and the purely hypothetical. They were all, one might say, nonexistent, but each non-existed in an entirely different way. — Stanisław Lem, The Cyberiad

Usually, we can be right in one way but mistaken in infinitely many ways. And each of these mistakes reveals something about the cognitive process. Think about Freudian slips: “when you say one thing, but mean your mother”. In a similar vein, word vectors can carry connotations, including undesirable ones (see How to make a racist AI without really trying). I recommend taking the Implicit Bias Test to see what your unconscious biases are for or against people of a given gender, age or ethnicity.

People learn a lot about human neuroscience from damaged brain regions, as in the famous book The Man Who Mistook His Wife for a Hat by Oliver Sacks. With machine learning models, so far there is no ethics commission forbidding random experiments on them. Thanks to that, I run Deep Frankenstein: dissecting and sewing artificial neural networks, at Brainhack (no pun intended!).

Posts

So, without further ado, let’s jump to the content:

Current

- king - man + woman is queen; but why? — touches on the subject of analogies and cognitive metaphors with word embeddings such as word2vec or GloVe

- Does AI have a dirty mind, too? with Marek K. Cichy — racy adversarial examples, or: seemingly NSFW illusions fooling nude picture detectors

- Dreams, Drugs and ConvNets — seeing patterns, in vivo & in silico; or: artifacts generated by artificial neural networks and by psychedelics

Intend to write

But there are more coming!

- Overfitting — rote learning, superstitions, nipples and conspiracy theories

- Scalar fallacy — binary is bad, scalars are not enough

- AI is not an Abrahamic God — image classification is a reality, AGI and transhumanism are still sci-fi

- Trypophobia — the fear of holes (based on this), based on a project with Grzegorz Uriasz and Artur Puzio and a subsequent one with Piotr Woźnicki and Michał Kuźba

- YOLO?! Object (mis)classification and safety

All in all, Pan European Game Information is a checklist. We will cover most of the points.

Thanks

I am grateful to Rafał Jakubanis and Katarzyna Kańska for numerous remarks on the draft.